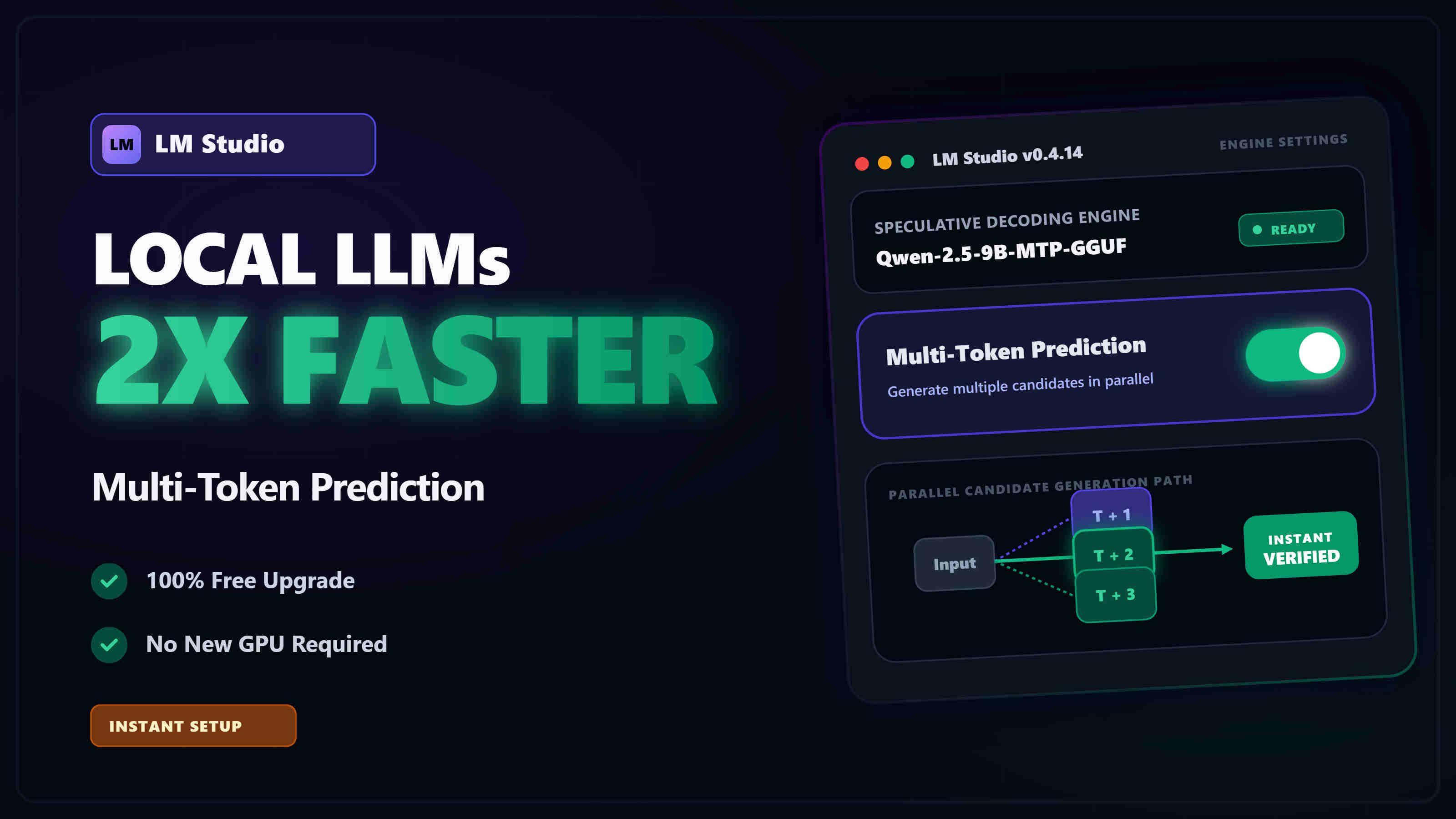

Multi-Token Prediction (MTP) LM Studio Tutorial - Boost tokens/sec

Learn how to speed up local LLM generation in LM Studio using Multi-Token Prediction (MTP) speculative decoding. This step-by-step tutorial covers the process from model selection through parameter tuning.